Introduction

The NVIDIA Vera Rubin platform, announced in early 2026, represents a massive shift in AI infrastructure by moving from individual chips to fully integrated, “co-designed” AI Factories. Named after the pioneering astronomer, the platform is engineered specifically for the era of agentic AI, where autonomous systems require continuous, long-context reasoning rather than just one-off tasks.

Key Infrastructure Innovations

The Vera Rubin platform introduces a unified system of six new chips that function as a single AI supercomputer. Its main components, along with related platform-level developments, are:

- Vera CPU: An 88-core Arm-based processor designed specifically for data orchestration and “agentic reasoning,” moving away from general-purpose server roles.

- Rubin GPU: Features the 3rd-generation Transformer Engine and HBM4 memory, delivering up to 50 petaFLOPS of NVFP4 inference performance.

- Rubin NVL72 Rack: A flagship 100% liquid-cooled system that can train large models with 1/4 the GPUs of the previous Blackwell generation.

- Inference Context Memory Storage (ICMS): Powered by the BlueField-4 DPU, this new storage tier handles the massive “KV cache” needed for long-context AI agents, boosting throughput by 5x.

- Spectrum-6 Ethernet: Next-gen networking using co-packaged optics (CPO) to scale AI factories to million-GPU environments.

- Cooling Industry Upheaval: By supporting warm-water cooling (up to 45°C), Vera Rubin may eliminate the need for expensive industrial water chillers. This announcement reportedly wiped out billions in stock value for traditional cooling companies like Modine and Johnson Controls.

- Energy Efficiency: Despite using more raw power than its predecessor, the platform delivers a 10x improvement in performance per watt, which is critical as energy becomes the primary bottleneck for data center expansion.

- Digital Twin Infrastructure: The NVIDIA Omniverse DSX Blueprint allows developers to build physically accurate digital twins of gigawatt-scale “AI Factories” to simulate performance before physical construction.

- Agentic Computing: With the introduction of OpenClaw (an open-source orchestration layer) and NemoClaw (an enterprise agent platform), NVIDIA is positioning itself as the full-stack operating system for autonomous AI agents.
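
To give a sense of why the KV cache for long-context agents calls for its own storage tier, here is a rough sizing sketch. It uses the standard estimate for transformer attention caches (keys plus values, per layer, per token). The model dimensions are hypothetical placeholders chosen for illustration, not the specifications of any NVIDIA or third-party model, and `kv_cache_bytes` is a name made up for this example.

```python
# Rough KV-cache sizing. All model dimensions below are hypothetical
# placeholders, not the specs of any real model or NVIDIA product.

def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_value=2):
    """Bytes needed to cache keys and values for `batch_size` sequences."""
    # Factor of 2: one key tensor and one value tensor per layer.
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return per_token * seq_len * batch_size


if __name__ == "__main__":
    for context in (8_000, 128_000, 1_000_000):
        size = kv_cache_bytes(num_layers=80, num_kv_heads=8, head_dim=128,
                              seq_len=context)
        print(f"{context:>9,} tokens -> {size / 1e9:8.2f} GB per sequence")
```

Under these assumed dimensions the cache grows linearly with context length, reaching hundreds of gigabytes for a single million-token sequence. Growth of that kind is what motivates moving the cache off the GPU and into a dedicated tier.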

Economic and Market Impact

- Pricing: Estimated at $3.5 million to $4.0 million per rack, a roughly 25% increase over the Blackwell generation.

- Production: Volume production is currently underway, with shipping expected to begin in the second half of 2026.

- Adoption: Major cloud providers including AWS, Google Cloud, Microsoft Azure, and Oracle have already committed to deploying Rubin-based systems.
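
The pricing estimate above implies a price range for the previous generation, which can be backed out with simple arithmetic. The sketch below does that calculation. Because the 25% figure is stated only as "roughly," the results are ballpark values, and `implied_previous_price` is a helper name invented for this example.

```python
# Back out the implied previous-generation rack price from the article's
# estimates. The 25% uplift is approximate, so results are ballpark only.

def implied_previous_price(new_price, increase_fraction):
    """Price before an increase of `increase_fraction` (0.25 means +25%)."""
    return new_price / (1.0 + increase_fraction)


if __name__ == "__main__":
    for rubin_price in (3.5e6, 4.0e6):
        previous = implied_previous_price(rubin_price, 0.25)
        print(f"${rubin_price / 1e6:.1f}M rack -> implied predecessor "
              f"${previous / 1e6:.1f}M")
```

Taken at face value, the estimates imply a Blackwell-generation rack price of about $2.8 million to $3.2 million.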

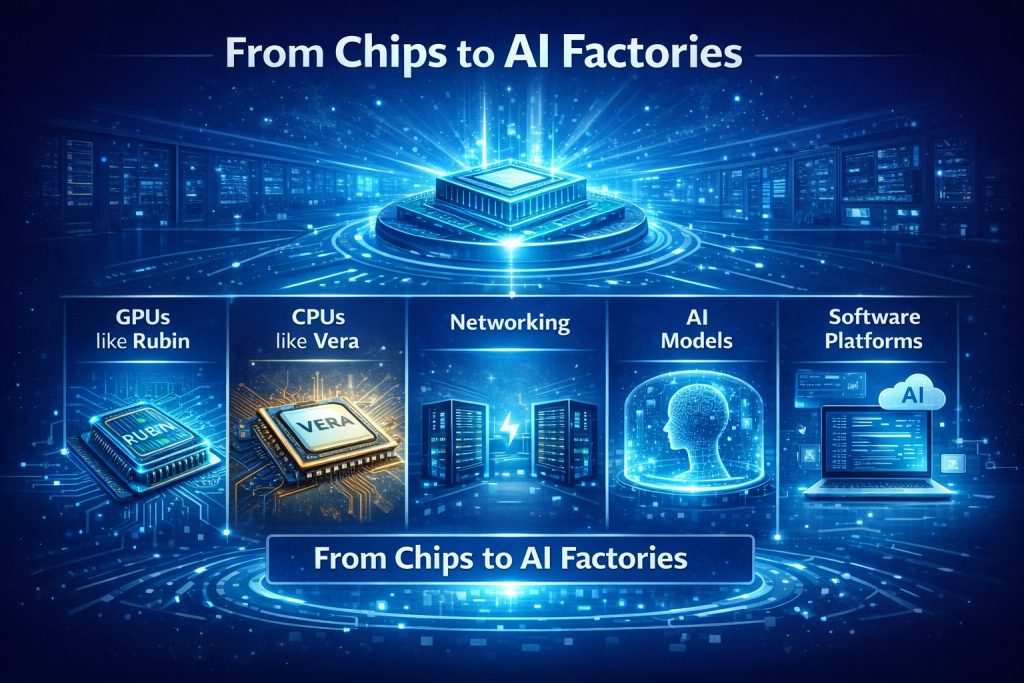

From Chips to AI Factories

One of the most important ideas introduced at GTC 2026 is the concept of AI factories, and at the center of these AI factories is the Vera Rubin architecture. In the past, companies built data centers to store data and run applications.

Now, with Vera CPUs and Rubin GPUs working together, companies are building AI factory infrastructure that produces intelligence at scale.

A Vera Rubin–powered AI factory includes:

- Rubin GPUs for AI training and inference

- Vera CPUs for data processing and orchestration

- High-speed networking for real-time data movement

- AI models for prediction and automation

- Software platforms for deploying and managing AI systems

These factories don’t produce physical products; they produce intelligence at scale, including:

- Predictions

- Automation

- Decisions

- Real-time insights

For example, a Vera Rubin AI factory can be used in different industries:

- Finance: Detect fraud in real time

- Logistics: Optimize routes and supply chains

- Healthcare: Analyze medical images and patient data

- Manufacturing: Run robots and automate quality control

This represents a completely new way of thinking about IT infrastructure: Vera Rubin systems don’t just run software; they power decision-making across the entire business.

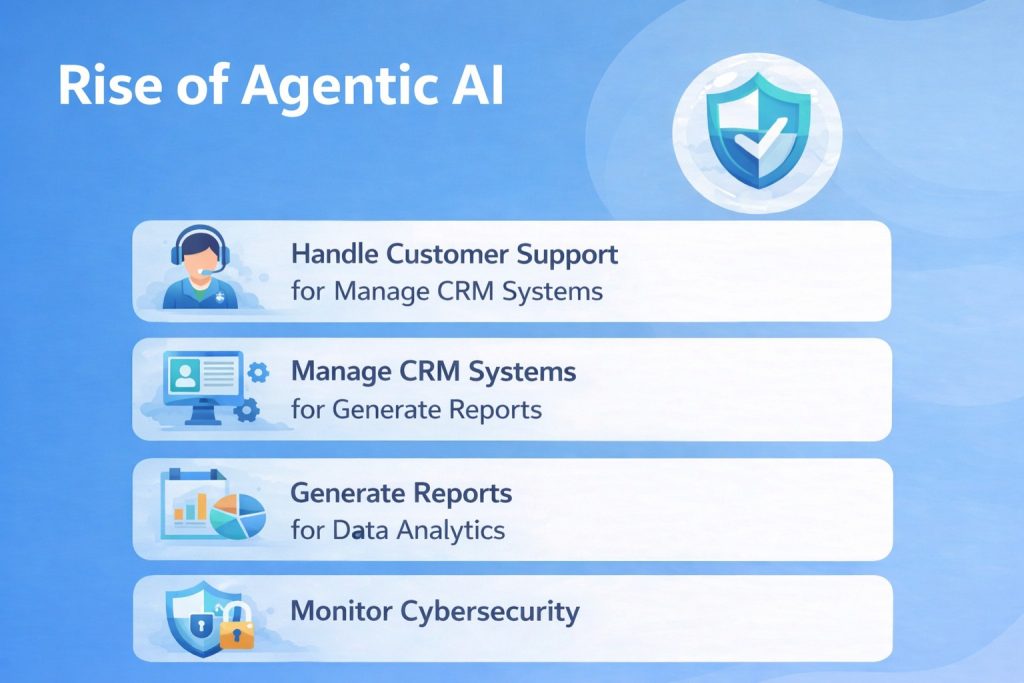

NemoClaw: The Rise of Agentic AI

Another major theme from GTC 2026 is agentic AI: AI systems that don’t just respond to prompts but take actions. Alongside Vera Rubin, NVIDIA also unveiled Agent OS (NemoClaw), an operating system designed specifically for AI agents.

This is significant because it signals a future where companies will deploy AI agents powered by the Vera Rubin architecture in the same way they deploy software today. In the near future, enterprises may use AI agents, driven by Vera Rubin’s powerful infrastructure, to:

- Automate real-time decision-making processes

- Optimize supply chains in real time

- Power autonomous vehicles with real-time data processing

- Manage customer service through advanced AI assistants

- Enhance fraud detection systems with real-time inference capabilities

With Vera Rubin’s combination of Rubin GPUs, Vera CPUs, and high-speed networking, the deployment of Agentic AI will be faster, more efficient, and capable of handling complex, real-world tasks across industries.

Vera Rubin: Revolutionizing AI Computing and the Future of Data Centers

At the center of NVIDIA’s GTC 2026 announcements is the Vera Rubin architecture, the next-generation AI computing platform. This platform combines:

- Rubin GPUs

- Vera CPUs

- High-speed networking

- AI inference systems

- Rack-scale computing

- Energy-efficient design

The most important shift here is the focus on inference, not just training. In the past, companies focused on training AI models. In the future, companies will spend more money running AI models because AI will be used in real-time business operations. Inference will power:

- AI assistants

- Recommendation engines

- Fraud detection

- Robotics

- Autonomous vehicles

How Data Centers Are Evolving for AI with Vera Rubin

With the introduction of the Vera Rubin architecture, AI data centers are evolving to meet the demands of next-generation AI computing. These data centers are now designed for:

- Massive GPU clusters powered by Rubin GPUs

- High-speed networking integrated with Vera CPUs

- Real-time inference capabilities

- AI model training, accelerated by Vera Rubin’s architecture

This shift in design reflects why companies like Amazon, Microsoft, and Google are investing billions into AI infrastructure.

With Vera Rubin at the core, data centers are transforming into AI production facilities, enabling real-time AI deployment and accelerating innovations across industries.

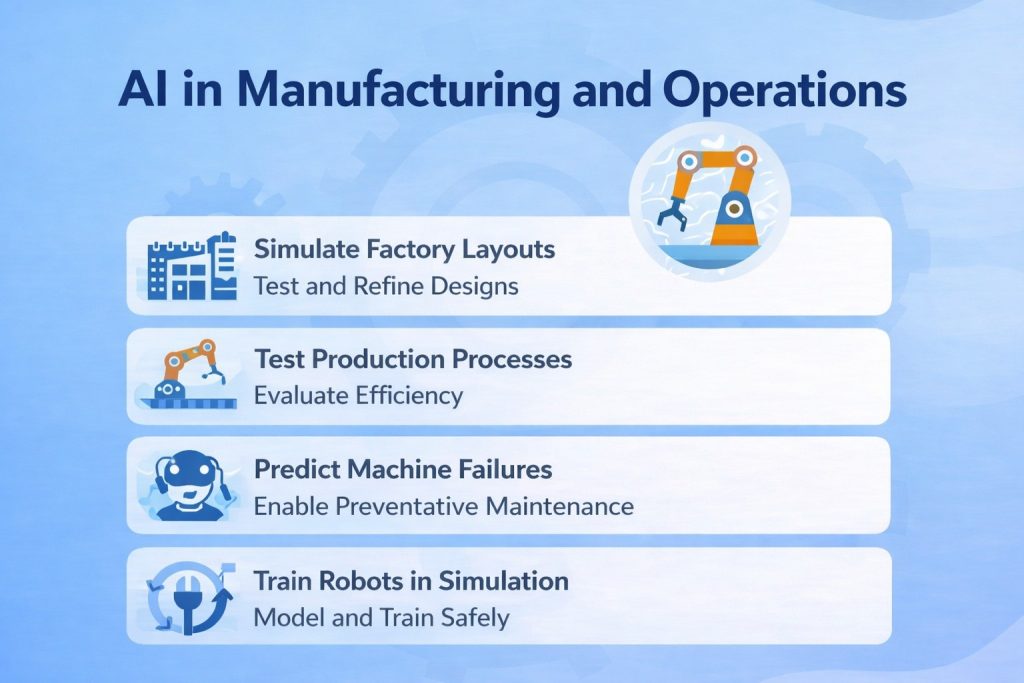

AI in Manufacturing and Operations

One of the industries most impacted by this shift is manufacturing. With AI and simulation platforms like DSX Air, companies can now create digital twins: virtual versions of factories, machines, and supply chains.

This allows businesses to:

- Simulate factory layouts

- Test production processes

- Predict machine failures

- Train robots in simulation

- Optimize energy usage

This means companies can test changes in a virtual environment before implementing them in the real world, saving millions of dollars.
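
The idea of testing a change virtually before making it physically can be illustrated with a deliberately tiny, deterministic model of a production line. This is a toy stand-in for the concept only. It does not represent how DSX Air or any NVIDIA simulation platform works, and the station rates and the `simulate_line` function are hypothetical.

```python
# Toy "digital twin" of a serial production line. Station rates (units/hour)
# are hypothetical; this is a concept sketch, not a real simulation platform.

def simulate_line(station_rates, hours):
    """Units finished by a serial line after `hours`, stepped hour by hour."""
    waiting = [0] * len(station_rates)  # units queued in front of each station
    finished = 0
    for _ in range(hours):
        # Walk stations back to front so a unit advances one station per hour.
        for i in reversed(range(len(station_rates))):
            # The first station is assumed to have unlimited raw material.
            available = station_rates[i] if i == 0 else waiting[i]
            done = min(station_rates[i], available)
            if i > 0:
                waiting[i] -= done
            if i + 1 < len(station_rates):
                waiting[i + 1] += done
            else:
                finished += done
    return finished


if __name__ == "__main__":
    baseline = simulate_line([10, 6, 8], hours=8)   # middle station is slow
    upgraded = simulate_line([10, 9, 8], hours=8)   # proposed upgrade
    print(f"baseline: {baseline} units, upgraded: {upgraded} units")
```

In this sketch, upgrading the middle station raises eight-hour output from 36 to 48 units, after which the last station becomes the bottleneck. Finding that kind of shift in the model, rather than on the factory floor, is the point of simulating first.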

A Trillion-Dollar Infrastructure Shift

What makes GTC 2026 so important is not just the technology but the scale of investment, particularly in the context of Vera Rubin. We are entering a trillion-dollar AI infrastructure cycle that includes:

- Rubin GPUs

- Data centers optimized with Vera Rubin’s AI architecture

- High-speed networking integrated with Vera CPUs

- Robotics fueled by Vera Rubin’s AI inference capabilities

- AI software designed to leverage Vera Rubin’s processing power

- Simulation platforms accelerated by Vera Rubin’s full-stack AI infrastructure

This is similar to previous infrastructure revolutions:

- Railroads

- Electricity

- Highways

- The internet

AI infrastructure, driven by Vera Rubin, is the next major economic wave, transforming industries and unlocking new levels of efficiency, scalability, and innovation.

The Rise of Sovereign AI

Another important concept discussed at GTC 2026 is Sovereign AI, where countries build their own AI infrastructure, powered by the Vera Rubin architecture, to control their data, models, and computing power.

This is important for:

- National security, with Vera Rubin enabling secure and localized AI systems

- Economic competitiveness, as countries leverage Vera Rubin’s AI infrastructure for global advantage

- Data privacy, ensuring sensitive data is processed within national borders using Vera Rubin-powered data centers

- AI regulation, where governments will oversee the deployment of AI agents and models built on Vera Rubin’s infrastructure

- Local innovation, fostering AI advancements tailored to specific regional needs, driven by Vera Rubin’s capabilities

This means AI infrastructure will not just be built by tech companies, but also by governments, with Vera Rubin at the core of these developments.

For enterprises, this means AI strategy will increasingly involve where data is stored, where AI runs, and which infrastructure, such as Vera Rubin, supports AI operations.

Real-World Case Studies

Hewlett Packard Enterprise (HPE)

HPE expanded its HPE Private Cloud AI offering, co‑engineered with NVIDIA technology. The enhanced platform integrates NVIDIA’s new Vera Rubin architecture, enabling enterprises to scale up to 128 GPUs in a single AI deployment.

- This makes it easier for businesses to run large‑scale inferencing and multi‑model AI workloads without sacrificing performance.

- Organizations that need consistently high performance for AI tasks can now deploy fully managed AI clouds with predictable performance and scalability.

- HPE’s focus on enterprise air‑gapped and regulated deployments helps sectors like finance and government adopt AI securely.

- GTC 2026 accelerated HPE’s ability to deliver production‑ready AI infrastructure as enterprises shift from experimentation to full deployment.

Lenovo

Lenovo introduced new AI‑ready hardware, including the ThinkPad P14s Gen 7 mobile workstation and hybrid AI servers (ThinkSystem and ThinkEdge), built around NVIDIA RTX Blackwell GPUs. These systems support both on‑premises inference and model training workloads.

- AI teams can now conduct serious development and testing outside of the data center, and easily scale workloads back into enterprise infrastructure as projects mature.

- The hybrid setup helps enterprises bridge the gap between edge use and centralized AI processing.

- Lenovo’s advancements illustrate how infrastructure vendors are enabling flexible AI deployments that follow business needs from pilot to production.

Google Cloud

As part of the broader NVIDIA ecosystem at GTC 2026, Google Cloud showcased how its AI platform leverages NVIDIA’s infrastructure to deliver real‑time AI services. This includes advanced inference capabilities for conversational agents and automation workflows.

- Customers across sectors, from retail to enterprise support, can deploy AI models that interact in real time with users and backend systems.

- The collaboration also supports the rapid deployment of agentic and decision‑making AI workloads.

- Google Cloud’s work at GTC underscores a shift from isolated AI experiments toward integrated services that sit directly in enterprise operations.

NTT DATA

At the conference, NTT DATA announced its use of NVIDIA’s full‑stack AI infrastructure to build enterprise AI factories and turnkey deployments that combine data, compute, models, and workflows into standardized systems.

- Enterprises adopting NTT DATA’s AI factory model can deploy production‑ready AI faster, with built‑in governance, performance monitoring, and scalable infrastructure.

- The result is reduced time‑to‑value and more predictable outcomes for AI initiatives.

- NTT DATA’s approach highlights how consistent infrastructure standards help companies go beyond proof‑of‑concept work.

CoreWeave

While not a direct vendor partner announcement, CoreWeave’s ongoing expansion of GPU cloud infrastructure aligns with the NVIDIA ecosystem’s push at GTC. Its platforms increasingly support the latest NVIDIA GPUs and technologies designed for inference and agent‑based AI workloads.

- CoreWeave also serves as a foundation for enterprises requiring on‑demand AI infrastructure at scale.

- Enterprises that don’t own on‑premises AI infrastructure can leverage CoreWeave’s cloud platforms to access powerful AI resources.

- This reduces capital expenses and enables rapid scaling for training and inference jobs.

- CoreWeave’s role in the broader ecosystem reflects how third‑party cloud providers are crucial partners in operationalizing AI infrastructure for global enterprises.

Designing the Future with Simulation

One of the most fascinating parts of GTC 2026 is how simulation is being used to design the future. Companies can now simulate:

- Factories

- Warehouses

- Traffic systems

- Robots

- Supply chains

- Power grids

- Autonomous vehicles

Simulation reduces risk, lowers costs, and accelerates innovation. In the future, companies will build everything in simulation before building it in the real world.

Just as cloud computing created the biggest tech companies of the last decade, AI infrastructure may create the biggest companies of the next decade.

AI is no longer software. AI is infrastructure. And GTC 2026 may be remembered as the moment this shift became clear to the world.

Deepak Wadhwani has over 20 years of experience in software and wireless technologies. He has worked with Fortune 500 companies including Intuit, ESRI, Qualcomm, Sprint, Verizon, Vodafone, Nortel, Microsoft and Oracle in over 60 countries. Deepak has worked on Internet marketing projects in San Diego, Los Angeles, Orange County, Denver, Nashville, Kansas City, New York, San Francisco and Huntsville. Deepak has been a founder of technology startups for one of the first Cityguides, yellow pages online and web-based enterprise solutions. He is an internet marketing and technology expert and co-founder of a San Diego Internet marketing company.